Artificial Intelligence is concerned with creating computers or machines as intelligent as human beings. It is certainly one of the most important inventions of mankind because it combines important new-age technologies and will accelerate them. It can also help us to solve the greatest challenges of our time.

Algorithms using AI are already deeply integrated into our everyday lives. Route calculation in Google Maps, conversations with Alexa or Siri, and personalized YouTube or Netflix recommendations are all enabled by AI.

AI technology is a crucial anchor of much of the digital transformation taking place today as organizations position themselves to capitalize on the ever-growing amount of data being generated and collected.

So how has this change come about? One of the main reasons is the Big Data revolution that has unfolded since mainstream society merged with the digital world. The availability of large and ever-growing data sets has led to extensive research into ways this data can be processed, analyzed, and acted upon. Since machines are better suited than humans to this work, the focus has been on training machines to do it in as “smart” a way as possible.

“The rise of powerful AI will be either the best or the worst thing, ever to happen to humanity.” — Stephen Hawking

What is Artificial Intelligence?

The concept of what defines AI has changed over time, but at its core there has always been the idea of making a computer, a computer-controlled robot, or a piece of software think intelligently, in a manner similar to the way intelligent humans think.

Building AI involves studying how the human brain thinks and how humans learn, decide, and work while trying to solve a problem, and then using the outcomes of this study as a basis for developing intelligent machines and applications.

After all, human beings have proven uniquely capable of understanding the world around us and using this information to make the right changes. If we want to create machines to help us do this more efficiently, then it makes sense to use ourselves as a blueprint.

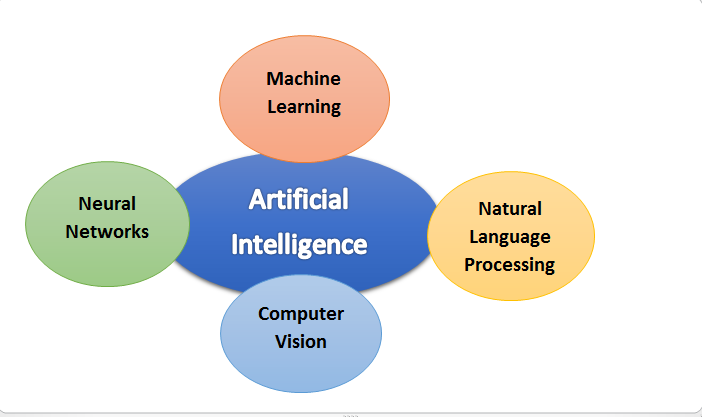

What are the different Domains of Artificial Intelligence?

Machine Learning

Machine learning is a method of data analysis that automates analytical model building. It is a branch of artificial intelligence based on the idea that systems can learn from data and experience, identify patterns, and make decisions with minimal human intervention.

Machine learning is used in search engines, in email filters that sort out spam, in websites that make personalized recommendations, in banking software that detects suspicious transactions, and in many phone apps, for example for voice recognition.
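
To make the idea of "learning from data" concrete, here is a minimal sketch of a spam filter that learns word patterns from labeled messages. It uses a simple naive Bayes approach, which is only one of many possible techniques and is an assumption made for this illustration. The function names `train` and `classify` and the toy training messages are hypothetical.

```python
import math
from collections import Counter

def train(examples):
    """Count how often each word appears in spam and in non-spam ("ham") messages."""
    word_counts = {"spam": Counter(), "ham": Counter()}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(text.lower().split())
    return word_counts, label_counts

def classify(text, word_counts, label_counts):
    """Return the label with the higher naive Bayes score for the text."""
    vocabulary = set(word_counts["spam"]) | set(word_counts["ham"])
    total_messages = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label in ("spam", "ham"):
        total_words = sum(word_counts[label].values())
        # Log prior: how common this label was in the training data.
        score = math.log(label_counts[label] / total_messages)
        for word in text.lower().split():
            # Laplace smoothing so unseen words do not zero out the score.
            count = word_counts[label][word] + 1
            score += math.log(count / (total_words + len(vocabulary)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label

# Hypothetical toy training data, invented for illustration only.
training_data = [
    ("win a free prize now", "spam"),
    ("free money claim your prize", "spam"),
    ("meeting moved to monday", "ham"),
    ("lunch on monday with the team", "ham"),
]
spam_model = train(training_data)
print(classify("claim your free prize", *spam_model))   # spam
print(classify("team meeting on monday", *spam_model))  # ham
```

Nobody wrote a rule such as "messages containing 'prize' are spam". The program derived that pattern from the examples it was given, which is the sense in which it makes decisions with minimal human intervention.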

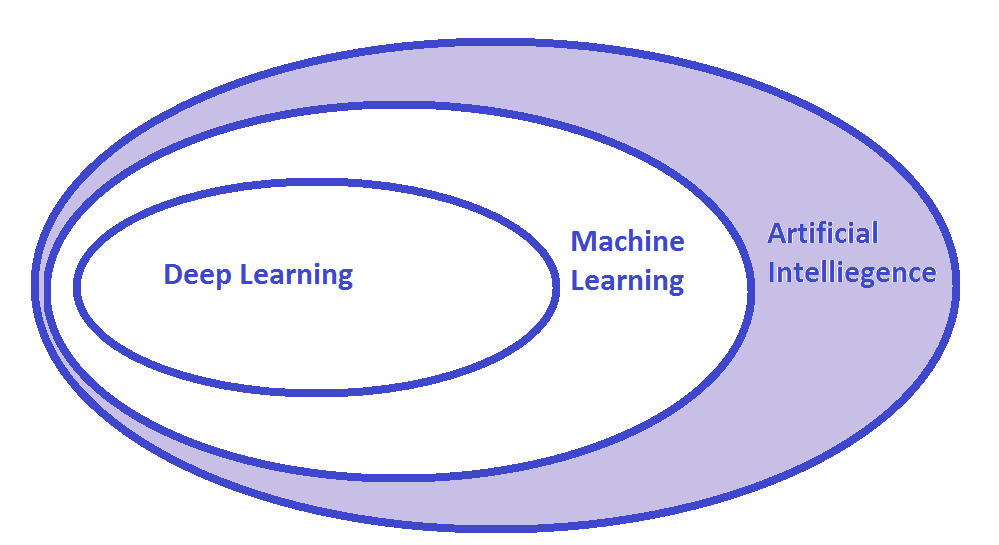

Deep Learning

Deep learning is a subset of machine learning that uses multi-layered neural networks capable of learning from data, including data that is unstructured and unlabeled. Deep learning is also known as deep neural learning or deep neural networks.

Deep learning is becoming popular because its models are capable of achieving state-of-the-art accuracy. These models are trained using large data sets along with neural network architectures.

Deep learning applications are used in fields ranging from automated driving to medical devices. The following are examples from automated driving:

- Automotive researchers are using deep learning to automatically detect objects such as stop signs and traffic lights.

- Deep learning is also used to detect pedestrians, which helps decrease accidents.

Natural Language Processing

Natural language processing (NLP) is the ability of a computer program to understand human language as it is spoken and written, referred to as natural language. It is a component of artificial intelligence. NLP has existed for more than 50 years and has roots in the field of linguistics.

NLP makes it possible to interact with a computer in the natural language spoken by humans. Google Translate, Alexa, and Siri are some of the applications based on natural language processing.

Computer Vision

Computer vision is a field of artificial intelligence that trains computers to gain a high-level understanding of digital images or videos. From an engineering perspective, it combines digital images from cameras and videos with deep learning models so that machines can understand and automate tasks that the human visual system can do.

Computer vision already has several applications today, and its scope is expected to grow:

- Facial Recognition for surveillance and security systems

- Retail stores also use computer vision for tracking inventory and customers

- Autonomous Vehicles

- Computer Vision in medicine is used for diagnosing diseases

- Financial Institutions use computer vision to prevent fraud, allow mobile deposits, and display information visually

Neural Network

An artificial neural network (ANN) is a component of artificial intelligence that tries to simulate the workings of the human brain.

A neural network is built from many layers of perceptrons, which is why it is also called a ‘multi-layer perceptron’. Each of these neurons receives information as a set of numerical inputs. Combining these inputs with a group of weights and a bias produces a single output.
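
The way a single neuron combines inputs, weights, and a bias can be sketched in a few lines. This is a minimal illustration, not a full neural network library. The step activation, the hand-picked weights, and the function names `perceptron` and `xor_network` are assumptions made for this example. In practice the weights are learned from data rather than chosen by hand.

```python
def perceptron(inputs, weights, bias):
    """Combine numerical inputs with weights and a bias into a single output."""
    weighted_sum = sum(x * w for x, w in zip(inputs, weights)) + bias
    # Step activation: output 1 if the weighted sum is positive, else 0.
    return 1 if weighted_sum > 0 else 0

def xor_network(a, b):
    """Two layers: a hidden layer of two perceptrons feeds one output perceptron."""
    # Hypothetical hand-picked weights; a real network would learn them.
    hidden_or = perceptron([a, b], [1.0, 1.0], -0.5)
    hidden_nand = perceptron([a, b], [-1.0, -1.0], 1.5)
    return perceptron([hidden_or, hidden_nand], [1.0, 1.0], -1.5)

for a in (0, 1):
    for b in (0, 1):
        print(a, b, xor_network(a, b))
# 0 0 0
# 0 1 1
# 1 0 1
# 1 1 0
```

A single perceptron cannot compute XOR, but stacking perceptrons in layers can, which hints at why multi-layer networks are more expressive than a single neuron.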

The following are some applications of neural networks:

- Text-to-speech conversion, text classification and categorization, information extraction, and so on.

- Question Answering systems automatically answer different types of questions asked in natural languages

- Automated writing of reports, generating texts based on analysis of retail sales data, summarizing electronic medical records, producing textual weather forecasts from weather data, and even producing jokes.

What are the Limitations and Challenges of AI?

When you look at the brighter side of something, you should acknowledge that it has a darker side too. Like any other invention, AI comes with its own set of problems that we can’t ignore. So let us look at some of the major challenges of AI implementation.

- Lack of Creativity: Although AI can help you design and create something special, it still can’t compete with the human brain. Its creativity is limited to the creative ability of the person who programs and commands it.

- Privacy and Security: AI often requires “learning”, which can involve massive amounts of data. This calls into question the availability of the right kind of data and highlights the need for categorization, as well as issues of privacy and security around such data.

- Biased Processing of Data: If there is bias in the data that is inputted into an AI, this bias is likely to carry over to the results generated by the AI.

- Judging and Decision Making: Machines can’t judge what is right or wrong because they are incapable of understanding the concepts of ethics or legality. They are programmed for certain situations and so can’t make decisions when they encounter an unfamiliar situation that they were not programmed for. However, constant improvements are being made in this area.

- Lack of Ability to Learn from Experience: One of the most amazing characteristics of human cognitive power is its ability to develop with age and experience. The same can’t be said of traditional AI systems, which are machines that don’t improve with experience and instead suffer wear and tear over time. With machine learning, however, this aspect of AI is improving.

- High Cost of Implementation: Setting up AI-based machines, computers, and so on incurs huge costs, given the complexity of the engineering that goes into building them. The expense doesn’t stop there, as repair and maintenance are also very expensive.
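
The point about biased data in the list above can be illustrated with a small sketch. The "model" here is deliberately trivial: it only learns the most frequent outcome for each group. The group names, the outcome labels, and the skewed history are all hypothetical and invented for this example.

```python
from collections import Counter, defaultdict

def train_majority_model(records):
    """Learn, for each group, the outcome seen most often in the training data."""
    outcomes = defaultdict(Counter)
    for group, outcome in records:
        outcomes[group][outcome] += 1
    return {group: counts.most_common(1)[0][0] for group, counts in outcomes.items()}

# Hypothetical, deliberately skewed history: group_b was rejected far more often.
biased_history = (
    [("group_a", "approved")] * 90 + [("group_a", "rejected")] * 10
    + [("group_b", "approved")] * 20 + [("group_b", "rejected")] * 80
)

bias_model = train_majority_model(biased_history)
print(bias_model["group_a"])  # approved
print(bias_model["group_b"])  # rejected
```

The model never sees anything about an individual case. It simply reproduces the skew in its training data, so every new member of group_b would be rejected. Real systems are far more complex, but the same mechanism applies.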

Future of AI: Will AI Replace Humans in the Workforce?

As humans, we have always been fascinated by technological change and fiction. Right now, we are living amid the greatest advancements in our history. Artificial intelligence is now seen as the next big thing in the field of technology.

In reality, AI is already at work all around us, making an impact on everything from our search results to our online dating, to the way we shop. Survey data shows that the use of AI in many sectors of business has grown by 270% in the last four years.

Given how artificial intelligence has been portrayed in the media, in particular in some of our favorite sci-fi movies, it’s clear that the growth of this technology has created fear that AI will one day replace humans in the workforce.

A recently published paper by the MIT Task Force on the Work of the Future, titled “Artificial Intelligence and the Future of Work,” looked closely at developments in AI and their relation to the world of work. The paper paints a more optimistic picture.

The MIT CCI paper suggests that we are a long way from reaching a point at which AI is comparable to human intelligence and could theoretically replace human workers entirely.

Rather than increasing the obsolescence of human labor, the paper predicts that AI will continue to drive massive innovation that will fuel many existing industries and could have the potential to create many new sectors for growth, ultimately leading to the creation of more jobs.

Some respected scientists, such as Stephen Hawking, have expressed concern that the development of intelligence that equals or exceeds our own, but can work at far higher speeds, could have negative implications for the future of humanity, or will at least create profound changes in the way our society functions.

This concern led to the founding last year of the Partnership on AI by a number of tech giants, including Google, Microsoft, IBM, Facebook, and Amazon. This group will research and promote ethical implementations of AI and set guidelines for future research and deployment of robots and AI.